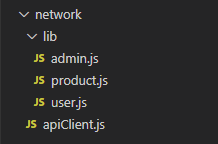

> April 9, 2021 Information Digest

### Learn a Little Machine Learning Every Day

Stanford Machine Learning, Week 2

#### Setting Up Your Programming Assignment Environment

Install Octave:

- **Warning: Do not install Octave 4.0.0.**
- Windows
  - Download: http://wiki.octave.org/Octave_for_Microsoft_Windows
  - **Octave on Windows can be used to submit programming assignments** in this course, but it will likely need a patch provided in the discussion forum. Refer to [this discussion forum thread](https://www.coursera.org/learn/machine-learning/discussions/vgCyrQoMEeWv5yIAC00Eog?page=2) for more information about the patch for your version.
- macOS
  - Homebrew: `brew install octave`
  - Disk image: [the Octave 3.8.0 installer](<http://sourceforge.net/projects/octave/files/Octave MacOSX Binary/2013-12-30 binary installer of Octave 3.8.0 for OSX 10.9.1 (beta)/GNU_Octave_3.8.0-6.dmg/download>)
- Linux
  - Install Octave [using your system package manager](http://wiki.octave.org/Octave_for_GNU/Linux).

MATLAB Online: https://matlab.mathworks.com/

1. When you reach the first programming exercise page (at the end of Week 2), **DO NOT** download the exercise files. The files for *all* programming exercises are already included in the zip file above.
2. **DO** record the assignment token provided on the exercise page; you will need it to submit your solutions.
3. **DO** read the instructions for MATLAB users in the **README** file, which will guide you through completing the exercises using MATLAB.
4. **DO** check the **README** file first for tips and solutions to common problems if you experience any issues.

#### Octave Resources

At the Octave command line, typing **help** followed by a function name displays documentation for a built-in function. For example, **help plot** brings up help information for plotting. Further documentation can be found at the Octave [documentation pages](http://www.gnu.org/software/octave/doc/interpreter/).
#### MATLAB Resources

At the MATLAB command line, typing **help** followed by a function name displays documentation for a built-in function. For example, **help plot** brings up help information for plotting. Further documentation can be found at the MATLAB [documentation pages](http://www.mathworks.com/help/matlab/).

#### Octave for macOS

Check the installed version:

```sh
octave --version
```

##### Further reading

http://wiki.octave.org/Octave_for_macOS#Package_Managers

The default plotting toolkit in Octave is Qt. On the Mac, however, gnuplot often works better. To switch to gnuplot, place the following lines in your **~/.octaverc** file:

```octave
setenv('GNUTERM', 'qt')       % make gnuplot draw into a Qt window
graphics_toolkit("gnuplot")   % use gnuplot instead of the default Qt toolkit
```

#### Introduction to MATLAB with Onramp

Made for MATLAB beginners or those looking for a quick refresher, the MATLAB Onramp is a 1-2 hour interactive introduction to the basics of MATLAB programming. **Octave users are also welcome to use Onramp** (it requires a free MathWorks account).

To access Onramp:

1. If you don’t already have one, create a MathWorks account at: https://www.mathworks.com/mwaccount/register
2. Go to https://matlabacademy.mathworks.com/ and click the MATLAB Onramp button to start learning MATLAB!

#### MATLAB Programming Tutorials

These short tutorial videos introduce MATLAB and cover various programming topics used in the assignments. Feel free to watch some now and return to them for reference as you work through the programming assignments. Many of the topics below are also covered in MATLAB Onramp.
**\* Indicates content covered in Onramp.**

##### Get Started with MATLAB and MATLAB Online

- [What is MATLAB?](https://youtu.be/WYG2ZZjgp5M)\*
- [MATLAB Variables](https://youtu.be/0w9NKt6Fixk)\*
- [MATLAB as a Calculator](https://youtu.be/aRSkNpCSgWY)\*
- [MATLAB Functions](https://youtu.be/RJp46UVQBic)\*
- [Getting Started with MATLAB Online](https://youtu.be/XjzxCVWKz58)
- [Managing Files in MATLAB Online](https://youtu.be/B3lWLIrYjC0)

##### Vectors

- [Creating Vectors](https://youtu.be/R5Mnkrk9Mos)\*
- [Creating Uniformly Spaced Vectors](https://youtu.be/_zqTOV5yl8Y)\*
- [Accessing Elements of a Vector Using Conditions](https://youtu.be/8D04GW_foQ0)\*
- [Calculations with Vectors](https://youtu.be/VQaZ0TvjF0c)\*
- [Vector Transpose](https://youtu.be/vgRLwjHBmsg)

##### Visualization

- [Line Plots](https://youtu.be/-hhJoveE4sY)\*
- [Annotating Graphs](https://youtu.be/JyovEGPSdoI)\*
- [Multiple Plots](https://youtu.be/fBx8EFuXFLM)\*

##### Matrices

- [Creating Matrices](https://youtu.be/qdTdwTh6jMo)\*
- [Calculations with Matrices](https://youtu.be/mzzJ9gnMrYE)\*
- [Accessing Elements of a Matrix](https://youtu.be/uWPHxpTuZRA)\*
- [Matrix Creation Functions](https://youtu.be/VPcbpVd_mPA)\*
- [Combining Matrices](https://youtu.be/ejTr3ekTTyA)
- [Determining Array Size and Length](https://youtu.be/IF9-ffmxuy8)
- [Matrix Multiplication](https://youtu.be/4hsx3bdNjGk)
- [Reshaping Arrays](https://youtu.be/UQpDIHlFo8A)
- [Statistical Functions with Matrices](https://youtu.be/Y97W3_u7cM4)

##### MATLAB Programming

- [Logical Variables](https://youtu.be/bRMg4GsFDQ8)\*
- [If-Else Statements](https://youtu.be/JZSuU-Laigo)\*
- [Writing a FOR loop](https://youtu.be/lg65bzgvI5c)\*
- [Writing a WHILE Loop](https://youtu.be/PKH5lCMJXbk)
- [Writing Functions](https://youtu.be/GrcNN04eqXU)
- [Passing Functions as Inputs](https://youtu.be/aNCwR9dRjHs)

### Other Worthwhile Reads

#### Clean Axios API Calls

Original: [How To Write Clean API Calls With
Axios](https://betterprogramming.pub/how-to-write-clean-api-calls-with-axios-ddbc7df4256c)

##### Folder Structure

##### Using Interceptors for Clean Redirects

```js
// apiClient.js
import axios from 'axios';

// Shared axios instance: every request gets the same base URL and JSON headers.
export const axiosClient = axios.create({
  baseURL: 'https://api.example.com',
  headers: {
    'Accept': 'application/json',
    'Content-Type': 'application/json'
  }
});

// Response interceptor: pass successes through unchanged,
// send the user to the login page on 401, and log every failure.
axiosClient.interceptors.response.use(
  function (response) {
    return response;
  },
  function (error) {
    // error.response is undefined for network errors and timeouts.
    const res = error.response;
    if (res && res.status === 401) {
      window.location.href = "https://example.com/login";
    }
    console.error("Looks like there was a problem. Status Code: " + (res ? res.status : "no response"));
    return Promise.reject(error);
  }
);
```

##### API Handler

```js
import { axiosClient } from "../apiClient";

// GET /product
export function getProduct() {
  return axiosClient.get('/product');
}

// POST /product; axios serializes plain objects to JSON automatically.
export function addProduct(data) {
  return axiosClient.post('/product', data);
}
```

##### Using the Handlers in the App

```js
import { getProduct } from "../network/lib/product";

getProduct()
  .then(function (response) {
    // Process response.data and update the UI.
  })
  .catch(function (error) {
    // The interceptor has already logged the error; show UI feedback here.
  });
```

#### Why Intelligence Might Be Simpler Than We Think

Original: [Why Intelligence might be simpler than we think](https://towardsdatascience.com/why-intelligence-might-be-simpler-than-we-think-1d3d7feb5d34)

##### A unified theory of brain function

> In his book ***On Intelligence***, Jeff Hawkins complains that the prevalent picture of the brain is of an organ composed of highly specialized regions.
>
> In fact, we observe a surprising homogeneity in the anatomy of the neocortex. ***Neuroplasticity*** indicates that most brain regions can easily take on tasks previously carried out by other brain regions, showing a certain universality behind their design principles.

> In his bestseller **The Brain That Changes Itself**, Norman Doidge tells impressive stories of patients remapping entire sensory systems to new parts of the brain, like people learning to see with their tongue by mapping visual stimuli recorded with a camera onto sensory stimuli delivered straight into their mouth.

> The fact of plasticity and flexible learning can be interpreted as pointing, in accord with the sparsity of information in our genes, towards a universal structure underlying both the biological setup of the neocortex and the learning algorithms with which it operates.

##### The structure of thought

> As Ray Kurzweil explains in his book **How to Create a Mind**, we perceive the world in a hierarchical manner, composed of simple patterns increasing in complexity. According to him, pattern recognition forms the foundation of all thought, from the most primitive patterns up to highly abstract and complex concepts.

##### The biology of pattern recognition

> Modern neuroimaging data indicates that the neocortex is composed of a uniform assortment of structures called cortical columns. Each one is built up from around 100 neurons.
>
> Kurzweil proposes that these columns form what he calls minimal pattern recognizers. A conceptual hierarchy is created by connecting layers upon layers of pattern recognizers with each other, each specialized in recognizing a single pattern from the input of one of many possible sensory modalities (like the eyes, the ears, the nose).
>
> Building upon basic feature extraction (like detecting edges in visual stimuli or recognizing a tone), these patterns stack up to form more and more intricate patterns.

> A pattern recognizer is not bound to, say, processing visual or auditory stimuli. It can process all kinds of signals as inputs, generating outputs based on structures contained in the inputs. Learning means wiring up pattern recognizers and learning their weight structure (basically how strongly they respond to each other’s input and how densely they are interconnected), similar to what is done when training neural networks.

##### The role of information

> There is much uniformity in the input to neurons, the underlying currency of neural computation. Whichever signal the brain is processing, it is always composed of spatial and temporal firing patterns of neurons. Every kind of pattern we observe in the outside world is encoded by our sense organs into neural firing patterns, which then, according to Kurzweil, flow up and down the hierarchy of pattern recognizers until meaning is successfully extracted.
>
> The neuroscientific evidence is supported by ideas from computer science. In his book **The Master Algorithm**, Pedro Domingos proposes that we might find a universal algorithm that would, given the right data, allow us to learn pretty much anything we could think of.
>
> **This universal learning algorithm may even be composed of a mix of already existing learning algorithms (like Bayesian networks, connectionist or symbolist approaches, evolutionary algorithms, support vector machines, etc.).**

> When you learn to recognize a face from pictures of 300x300 pixels, you have 90,000 pixels containing information, but if you know what usually makes up a face, a face can be characterized by much less information (e.g. relevant features like the distance between the eyes, the width of the mouth, the position of the nose, etc.).
>
> This idea is used, for instance, in some deep generative models like autoencoders (as I wrote in more detail in my article *How To Make Computers Dream*), where latent, lower-dimensional representations of the data are learned and then used to generate higher-dimensional, realistic-looking output.

##### Why intelligence might be simpler than we think

> There are many questions to address before we have “solved” intelligence. Inferring causality or general, common-sense knowledge structures, as Yann LeCun points out here, is a big issue, and building predictive models of the world into the algorithms (as I discuss at length in my article *The Bayesian Brain Hypothesis*) is very probably a necessary next step out of many more.
>
> Jürgen Schmidhuber likens the progress of science to finding ever more efficient compression algorithms: Newton and Einstein brilliantly managed not to come up with large and incomprehensible formulas, but rather expressed an incredible range of phenomena with equations that could be written in one line. Schmidhuber thinks that this kind of ultra-compression might at some point also come true for general learners.

#### Self-hosted

Original: [Screw it, I’ll host it myself](https://www.markozivanovic.com/screw-it-ill-host-it-myself/)

- Gitea
- Nextcloud
- Personal CRM & PM
  - Monica CRM
  - Kanboard
- Development tools & analytics
  - [Plausible](https://plausible.io/)

### A Few Takeaways

- [Do you have FOBO? (fear of a better option)](https://www.youtube.com/watch?v=_zn-7m1Yn-0)
  - DECIDE framework:
    - **D**efine the problem
    - **E**stablish the criteria
    - **C**onsider the alternatives
    - **I**dentify the choice
    - **D**evelop a plan of action
    - **E**valuate the solution