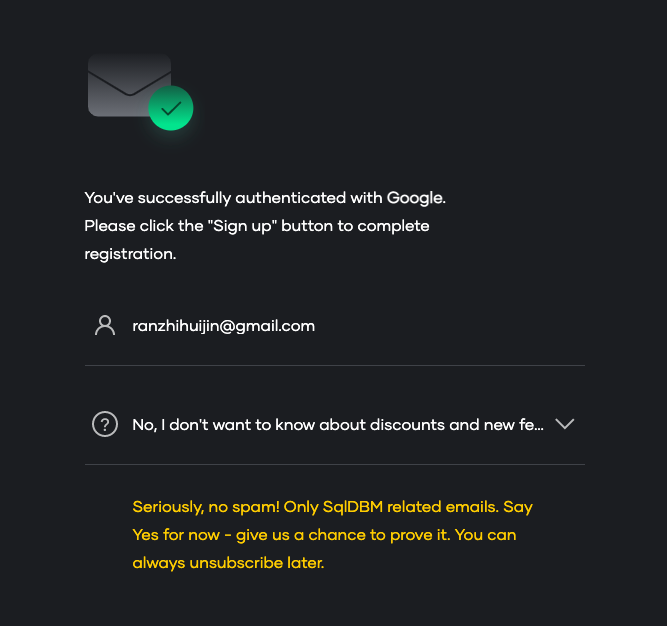

Information digest, April 22, 2021

### A Bit of Machine Learning Every Day

#### Machine Learning: Programming Exercise 1

##### Recap

- warmUpExercise.m
- plotData.m
- computeCost.m
- gradientDescent.m

##### Submission Progress

```
==
==                                  Part Name |     Score | Feedback
==                                  --------- |     ----- | --------
==                           Warm-up Exercise |  10 /  10 | Nice work!
==          Computing Cost (for One Variable) |  40 /  40 | Nice work!
==        Gradient Descent (for One Variable) |  50 /  50 | Nice work!
==                      Feature Normalization |   0 /   0 | Nice work!
==    Computing Cost (for Multiple Variables) |   0 /   0 |
==  Gradient Descent (for Multiple Variables) |   0 /   0 |
==                           Normal Equations |   0 /   0 |
==                                   --------------------------------
==                                            | 100 / 100 |
```

##### Feature Normalization

###### Prepare: Load Data

```matlab
% Load Data
data = load('ex1data2.txt');
X = data(:, 1:2);
y = data(:, 3);
m = length(y);

% Print out some data points
% First 10 examples from the dataset
fprintf(' x = [%.0f %.0f], y = %.0f \n', [X(1:10,:) y(1:10,:)]');

% Scale features and set them to zero mean
[X, mu, sigma] = featureNormalize(X);
```

###### FeatureNormalize Implementation

[https://github.com/aqibsaeed/Matlab-ML/blob/master/Linear%20Regression/featureNormalize.m](https://github.com/aqibsaeed/Matlab-ML/blob/master/Linear%20Regression/featureNormalize.m)

```matlab
function [X_norm, mu, sigma] = featureNormalize(X)
    X_norm = X;
    mu = zeros(1, size(X, 2));
    sigma = zeros(1, size(X, 2));

    % Normalize each feature (column) to zero mean and unit standard deviation
    for i = 1:size(X, 2)
        mu(i) = mean(X(:,i));
        sigma(i) = std(X(:,i));
        X_norm(:,i) = (X_norm(:,i) - mu(i)) ./ sigma(i);
    end
end
```

Other Implementation

https://github.com/mGalarnyk/datasciencecoursera/blob/master/Stanford_Machine_Learning/Week2/Assignment/Matlab/featureNormalize.m

```matlab
% Vectorized version: mean and std work column-wise, and the subtraction
% and division rely on implicit expansion (broadcasting) across rows
mu = mean(X);
sigma = std(X);
X_norm = (X - mu) ./ sigma;
```

##### Gradient Descent

```matlab
% Run gradient descent
% Choose some alpha value
alpha = 0.1;
num_iters = 400;

% Init Theta and Run Gradient Descent
theta = zeros(3, 1);
```

##### Add the bias term

Now
that we have normalized the features, we again add a column of ones to the data matrix X for the intercept (bias) term.

```matlab
% Add intercept term to X
X = [ones(m, 1) X];
```

```matlab
[theta, ~] = gradientDescentMulti(X, y, theta, alpha, num_iters);

% Display gradient descent's result
fprintf('Theta computed from gradient descent:\n%f\n%f\n%f\n', theta(1), theta(2), theta(3));
```

Note: in vectorized form, gradient descent and the cost function are computed the same way for multivariate and univariate linear regression.

- Gradient descent:

  ```matlab
  for iter = 1:num_iters
      theta = theta - (alpha/m) * (X' * (X * theta - y));
      J_history(iter) = computeCostMulti(X, y, theta);
  end
  ```

- Normal equation: `theta = pinv(X' * X) * (X' * y);`

#### Week3 Classification

##### Logistic Regression

### A Bit of Algorithms Every Day

> This problem was asked by Microsoft.
>
> Suppose an arithmetic expression is given as a binary tree. Each leaf is an integer and each internal node is one of '+', '−', '∗', or '/'.
>
> Given the root to such a tree, write a function to evaluate it.
>
> For example, given the following tree:

```
    *
   / \
  +    +
 / \  / \
3  2  4  5
```

You should return 45, as it is (3 + 2) * (4 + 5).

```python
class Node:
    def __init__(self, val):
        self.val = val
        self.left = None
        self.right = None


def solve_graph(root):
    # Leaf: the value is a numeric string, so return it as a number
    if root.val.isnumeric():
        return float(root.val)

    # Internal node: evaluate both subtrees, then apply the operator.
    # eval is used only on a string built from two floats and the node's operator.
    return eval("{} {} {}".format(solve_graph(root.left), root.val, solve_graph(root.right)))


d = Node("3")
e = Node("2")
f = Node("4")
g = Node("5")

b = Node("+")
b.left = d
b.right = e

c = Node("+")
c.left = f
c.right = g

a = Node("*")
a.left = b
a.right = c

assert solve_graph(a) == 45
assert solve_graph(c) == 9
assert solve_graph(b) == 5
assert solve_graph(d) == 3
```

### Other Things Worth Reading

### A Few Takeaways

- **We share a Planet**.
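  -- [Shawn Lukas](https://shawnlukas.com/)
- On UX design: while signing up for https://sqldbm.com/, I ran into the most sincere "subscribe to updates?" option I have seen so far. When you choose not to subscribe, a yellow highlighted notice appears. It persuaded me. Then I found that the free plan can only generate SQL for a single table and the paid plan's entry price is a bit high, so I dropped it. I will keep using dbdiagram.io.
- Check whether your phone number or email has been leaked: [';--have i been pwned?](https://haveibeenpwned.com/)
- Cloudflare's serverless service, [Workers](https://developers.cloudflare.com/workers/), is quite pleasant to use.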