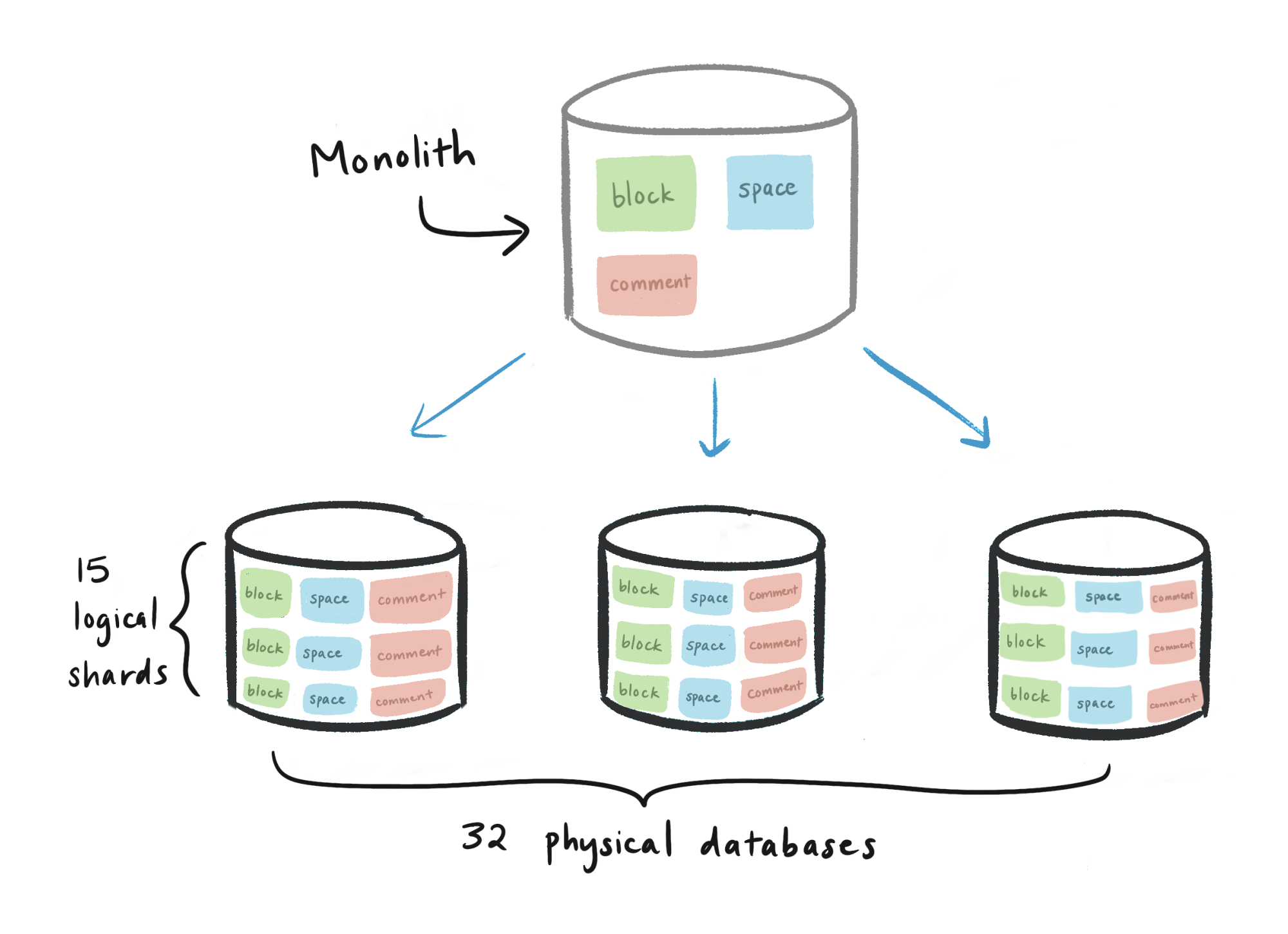

> Information digest, October 22, 2021

### Herding elephants: Lessons learned from sharding Postgres at Notion

origin: [Herding elephants: Lessons learned from sharding Postgres at Notion](https://www.notion.so/blog/sharding-postgres-at-notion)

> The shard nomenclature is [thought to originate](https://www.raphkoster.com/2009/01/08/database-sharding-came-from-uo/) from the MMORPG [Ultima Online](https://uo.com/), when the game developers needed an in-universe explanation for the existence of multiple game servers running parallel copies of the world. Specifically, each shard emerged from a shattered crystal through which the evil wizard Mondain had previously attempted to seize control of the world.

##### Deciding when to shard

Sharding represented a major milestone in our ongoing bid to improve application performance. Over the past few years, it’s been gratifying and humbling to see more and more people adopt Notion into every aspect of their lives. And unsurprisingly, all of the new company wikis, project trackers, and Pokédexes have meant billions of new [blocks, files, and spaces](https://www.notion.so/blog/data-model-behind-notion) to store. By mid-2020, it was clear that product usage would surpass the abilities of our trusty Postgres monolith, which had served us dutifully through five years and four orders of magnitude of growth. Engineers on-call often woke up to database CPU spikes, and simple [catalog-only migrations](https://medium.com/paypal-tech/postgresql-at-scale-database-schema-changes-without-downtime-20d3749ed680) became unsafe and uncertain.

When it comes to sharding, fast-growing startups must navigate a delicate tradeoff. During the aughts, an influx of blog posts [expounded](https://www.percona.com/blog/2009/08/06/why-you-dont-want-to-shard/) [the](http://www.37signals.com/svn/posts/1509-mr-moore-gets-to-punt-on-sharding#) [perils](https://www.drdobbs.com/errant-architectures/184414966) [of](https://www.infoworld.com/article/2073449/think-twice-before-sharding.html) sharding prematurely: increased maintenance burden, newfound constraints in application-level code, and architectural path dependence.¹ Of course, at our scale sharding was inevitable. The question was simply when.

For us, the inflection point arrived when the Postgres `VACUUM` process began to stall consistently, preventing the database from reclaiming disk space from dead tuples. While disk capacity can be increased, more worrying was the prospect of [transaction ID (TXID) wraparound](https://blog.sentry.io/2015/07/23/transaction-id-wraparound-in-postgres), which triggers a safety mechanism in which Postgres stops processing all writes to avoid clobbering existing data. Realizing that TXID wraparound would pose an existential threat to the product, our infrastructure team doubled down and got to work.
##### Designing a sharding scheme

If you’ve never sharded a database before, here’s the idea: instead of vertically scaling a database with progressively heftier instances, *horizontally* scale by partitioning data across multiple databases. Now you can easily spin up additional hosts to accommodate growth. Unfortunately, now your data is in multiple places, so you need to design a system that maximizes performance and consistency in a distributed setting.

> Why not just keep **scaling vertically**? As we found, playing Cookie Clicker with the RDS “Resize Instance” button is not a viable long-term strategy, even if you have the budget for it. **Query performance and upkeep processes often begin to degrade well before a table reaches the maximum hardware-bound size**; our stalling Postgres auto-vacuum was an example of this soft limitation.

##### Application-level sharding

We decided to implement our own partitioning scheme and route queries from application logic, an approach known as **application-level sharding**. During our initial research, we also considered packaged sharding/clustering solutions such as [Citus](https://www.citusdata.com/) for Postgres or [Vitess](https://vitess.io/) for MySQL.
While these solutions appeal in their simplicity and provide cross-shard tooling out of the box, the actual clustering logic is opaque, and we wanted control over the distribution of our data.²

Application-level sharding required us to make the following design decisions:

- **What data should we shard?** Part of what makes our data set unique is that the `block` table reflects [trees of user-created content](https://www.notion.so/blog/data-model-behind-notion), which can vary wildly in size, depth, and branching factor. A single large enterprise customer, for instance, generates more load than many average personal workspaces combined. We wanted to only shard the necessary tables, while preserving locality for related data.
- **How should we partition the data?** Good partition keys ensure that tuples are uniformly distributed across shards. The choice of partition key also depends on application structure, since distributed joins are expensive and transactionality guarantees are typically limited to a single host.
- **How many shards should we create? How should those shards be organized?** This consideration encompasses both the number of logical shards per table, and the concrete mapping between logical shards and physical hosts.

##### Decision 1: Shard all data transitively related to block

Since Notion’s [data model](https://www.notion.so/blog/data-model-behind-notion) revolves around the concept of a block, each occupying a row in our database, the `block` table was the highest priority for sharding. However, a block may reference other tables like `space` (workspaces) or `discussion` (page-level and inline discussion threads). In turn, a `discussion` may reference rows in the `comment` table, and so on.

We decided to shard **all tables reachable from the** **`block`** **table** via some kind of foreign key relationship. Not all of these tables needed to be sharded, but if a record was stored in the main database while its related block was stored on a different physical shard, we could introduce inconsistencies when writing to different datastores.

> For example, consider a block stored in one database, with related comments in another database. If the block is deleted, the comments should be updated. But since transactionality guarantees only apply within each datastore, the block deletion could succeed while the comment update fails.

##### Decision 2: Partition block data by workspace ID

Once we decided which tables to shard, we had to divide them up.
Choosing a good partition scheme depends heavily on the distribution and connectivity of the data; since Notion is a team-based product, our next decision was to **partition data by workspace ID**.³

Each workspace is assigned a UUID upon creation, so we can partition the UUID space into uniform buckets. Because each row in a sharded table is either a block or related to one, and **each block belongs to exactly one workspace**, we used the workspace ID as the *partition key*. Since users typically query data within a single workspace at a time, we avoid most cross-shard joins.

##### Decision 3: Capacity planning

> sharding postgres: "would you rather fight 1 user making 1M requests or 1M users making 1 request each"
>
> @Nintendo .DS_Store

Having decided on a partitioning scheme, our goal was to design a sharded setup that would handle our existing data *and* scale to meet our two-year usage projection with low effort. Here were some of our constraints:

- **Instance type:** Disk I/O throughput, quantified in [IOPS](https://docs.aws.amazon.com/AmazonRDS/latest/UserGuide/CHAP_Storage.html), is limited by both AWS instance type and disk volume. We needed at least 60K total IOPS to meet existing demand, with the capacity to scale further if needed.
- **Number of physical and logical shards:** To keep Postgres humming and preserve RDS replication guarantees, we set an upper bound of 500 GB per table and 10 TB per physical database. We needed to choose a number of logical shards and a number of physical databases, such that the shards could be evenly divided across databases.
- **Number of instances:** More instances means higher maintenance cost, but a more robust system.
- **Cost:** We wanted our bill to scale linearly with our database setup, and we wanted the flexibility to scale compute and disk space separately.

After crunching the numbers, we settled on an architecture consisting of **480 logical shards** evenly distributed across **32 physical databases**. The hierarchy looked like this:

- Physical database (32 total)
  - Logical shard, represented as a Postgres [schema](https://www.postgresql.org/docs/current/ddl-schemas.html) (15 per database, 480 total)
    - `block` table (1 per logical shard, 480 total)
    - `collection` table (1 per logical shard, 480 total)
    - `space` table (1 per logical shard, 480 total)
    - etc. for all sharded tables

We went from a single database containing every table to a fleet of 32 physical databases, each containing 15 logical shards, each shard containing one of each sharded table. In total, we had 480 logical shards.

We chose to construct `schema001.block`, `schema002.block`, etc. as **separate tables**, rather than maintaining a single [partitioned](https://www.postgresql.org/docs/10/ddl-partitioning.html) `block` table per database with 15 child tables. Natively partitioned tables introduce another piece of routing logic:

1. Application code: workspace ID → physical database.
2. Partition table: workspace ID → logical schema.

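
As a rough illustration of application-level routing over this hierarchy, the sketch below maps a workspace UUID to a logical shard and then to a physical database. The shard counts and the `schema001`-style naming come from the text; the equal-width bucketing of the UUID space, the contiguous shard-to-database assignment, and the function names are assumptions made for the example, not Notion's actual implementation.

```python
import uuid

NUM_LOGICAL_SHARDS = 480        # from the article
NUM_PHYSICAL_DATABASES = 32     # from the article
SHARDS_PER_DATABASE = NUM_LOGICAL_SHARDS // NUM_PHYSICAL_DATABASES  # 15


def logical_shard(workspace_id: uuid.UUID) -> int:
    """Return a shard index in [0, 480).

    Assumption: the 128-bit UUID space is cut into 480 equal,
    contiguous buckets.
    """
    return (workspace_id.int * NUM_LOGICAL_SHARDS) >> 128


def route(workspace_id: uuid.UUID) -> tuple[int, str]:
    """Return (physical database index, schema name) for a workspace.

    Assumption: logical shards are assigned to databases in contiguous
    runs of 15, and schemas are numbered from 1.
    """
    shard = logical_shard(workspace_id)
    database = shard // SHARDS_PER_DATABASE
    schema = f"schema{shard + 1:03d}"
    return database, schema


if __name__ == "__main__":
    ws = uuid.uuid4()
    db, schema = route(ws)
    print(f"workspace {ws} -> database {db}, table {schema}.block")
```

Because the mapping is a pure function of the workspace ID, the application can compute both the database and the schema in one step, with no lookup inside Postgres.
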

Keeping separate tables allowed us to route directly from the application to a specific database and logical shard.

##### Migrating to shards

Once we established our sharding scheme, it was time to implement it. For any migration, our general framework goes something like this:

1. **Double-write:** Incoming writes get applied to both the old and new databases.
2. **Backfill:** Once double-writing has begun, migrate the old data to the new database.
3. **Verification:** Ensure the integrity of data in the new database.
4. **Switch-over:** Actually switch to the new database. This can be done incrementally, e.g. double-reads, then migrate all reads.

##### Double-writing with an audit log

The double-write phase ensures that new data populates both the old and new databases, even if the new database isn’t yet being used. There are several options for double-writing:

- **Write directly to both databases:** Seemingly straightforward, but any issue with either write can quickly lead to inconsistencies between databases, making this approach too flaky for critical-path production datastores.
- **Logical replication:** Built-in [Postgres functionality](https://www.postgresql.org/docs/10/logical-replication.html) that uses a publish/subscribe model to broadcast commands to multiple databases. Limited ability to modify data between source and target databases.
- **Audit log and catch-up script:** Create an audit log table to keep track of all writes to the tables under migration. A catch-up process iterates through the audit log and applies each update to the new databases, making any modifications as needed.
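
The audit-log option can be sketched in a few lines. This is a toy, in-memory model under stated assumptions: the `AuditEntry` shape, the `seq` checkpoint, and the `transform` hook are hypothetical names invented for illustration, and a plain dict stands in for the new databases.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class AuditEntry:
    seq: int                  # hypothetical: position of the write in the audit log
    row_id: str               # primary key of the written row
    payload: Optional[dict]   # new row contents; None marks a delete


def catch_up(
    audit_log: list[AuditEntry],
    target: dict[str, dict],
    last_applied_seq: int,
    transform: Callable[[dict], dict] = lambda row: row,
) -> int:
    """Replay audit entries newer than the checkpoint onto `target`.

    Entries are applied in log order; `transform` is the place to make
    "any modifications as needed" before writing. Returns the new checkpoint.
    """
    for entry in sorted(audit_log, key=lambda e: e.seq):
        if entry.seq <= last_applied_seq:
            continue  # already propagated on an earlier pass
        if entry.payload is None:
            target.pop(entry.row_id, None)
        else:
            target[entry.row_id] = transform(entry.payload)
        last_applied_seq = entry.seq
    return last_applied_seq


if __name__ == "__main__":
    log = [
        AuditEntry(1, "a", {"title": "draft"}),
        AuditEntry(2, "b", {"title": "notes"}),
        AuditEntry(3, "a", {"title": "final"}),
        AuditEntry(4, "b", None),
    ]
    new_db: dict[str, dict] = {}
    checkpoint = catch_up(log, new_db, last_applied_seq=0)
    print(checkpoint, new_db)
```

Tracking a checkpoint lets the script run repeatedly without reapplying old entries, so it can be re-run until the new databases have caught up.
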

We chose the **audit log** strategy over logical replication, since the latter struggled to keep up with `block` table write volume during the [initial snapshot](https://www.postgresql.org/docs/10/logical-replication-architecture.html#LOGICAL-REPLICATION-SNAPSHOT) step.

##### Backfilling old data

Once incoming writes were successfully propagating to the new databases, we initiated a backfill process to migrate all existing data. With all 96 CPUs (!) on the `m5.24xlarge` instance we provisioned, our final script took around three days to backfill the production environment.

Any backfill worth its salt should **compare record versions** before writing old data, skipping records with more recent updates. Because of this check, the catch-up script and backfill could run in any order and the new databases would still eventually converge to replicate the monolith.

##### Verifying data integrity

Migrations are only as good as the integrity of the underlying data, so after the shards were up-to-date with the monolith, we began the process of **verifying correctness**.

- **Verification script:** Our script verified a contiguous range of the UUID space starting from a given value, comparing each record on the monolith to the corresponding sharded record. Because a full table scan would be prohibitively expensive, we randomly sampled UUIDs and verified their adjacent ranges.
- **“Dark” reads:** Before migrating read queries, we added a flag to fetch data from both the old and new databases (known as [dark reading](https://slack.engineering/re-architecting-slacks-workspace-preferences-how-to-move-to-an-eav-model-to-support-scalability/)). We compared these records and discarded the sharded copy, logging discrepancies in the process. Introducing dark reads increased API latency, but provided confidence that the switch-over would be seamless.

As a precaution, the **migration and verification logic were implemented by different people**. Otherwise, there was a greater chance of someone making the same error in both stages, weakening the premise of verification.

##### Difficult lessons learned

While much of the sharding project captured Notion’s engineering team at its best, there were many decisions we would reconsider in hindsight. Here are a few examples:

- **Shard earlier.** As a small team, we were keenly aware of the tradeoffs associated with premature optimization. However, we waited until our existing database was heavily strained, which meant we had to be very frugal with migrations lest we add even more load. This limitation kept us from using [logical replication](https://www.postgresql.org/docs/10/logical-replication.html) to double-write. The workspace ID (our partition key) was not yet populated in the old database, and **backfilling this column would have exacerbated the load** on our monolith.
  Instead, we backfilled each row on-the-fly when writing to the shards, requiring a custom catch-up script.
- **Aim for a zero-downtime migration.** Double-write throughput was the primary bottleneck in our final switch-over: once we took the server down, we needed to let the catch-up script finish propagating writes to the shards. Had we spent another week optimizing the script to spend <30 seconds catching up the shards during the switch-over, it may have been possible to hot-swap at the load balancer level without downtime.
- **Introduce a combined primary key instead of a separate partition key.** Today, rows in sharded tables use a composite key: `id`, the primary key in the old database; and `space_id`, the partition key in the current arrangement. Since we had to do a full table scan anyway, we could’ve combined both keys into a single new column, eliminating the need to pass `space_ids` throughout the application.

Despite these what-ifs, sharding was a tremendous success. For Notion users, a few minutes of downtime made the product tangibly faster. Internally, we demonstrated coordinated teamwork and decisive execution given a time-sensitive goal.

### Optimizing images on the web

origin: [Optimizing images on the web](https://blog.cloudflare.com/optimizing-images/)

##### Dimensions

[Since 2017, all modern browsers have supported the more dynamic `srcset` attribute](https://caniuse.com/srcset).
This allows authors to set multiple image sources, depending on the matching media condition of the visitor’s browser:

```html
<img
  srcset="hello_world_1500.jpg 1500w,
          hello_world_2000.jpg 2000w,
          hello_world_12000.jpg 12000w"
  sizes="(max-width: 1500px) 1500px,
         (max-width: 2000px) 2000px,
         12000px"
  src="hello_world_12000.jpg"
  alt="Hello, world!"
/>
```

##### Format

JPEG, PNG, GIF, WebP, and now, AVIF. AVIF is the latest image format with widespread industry support, and it often outperforms its preceding formats. **AVIF supports transparency with an alpha channel, it supports animations, and it is typically 50% smaller than comparable JPEGs** (vs. WebP's reduction of only 30%).

##### Quality

For some uses, lossless compression might be appropriate, but for the majority of websites, speed is prioritized and this minor loss in quality is worth the time and bytes saved. Optimizing where to set the quality is a balancing act: too aggressive and artifacts become visible on your image; too little and the image is unnecessarily large. [Butteraugli](https://opensource.google/projects/butteraugli) and [SSIM](https://en.wikipedia.org/wiki/Structural_similarity) are examples of algorithms which approximate our perception of image quality, but this is currently difficult to automate and is therefore best set manually. In general, however, we find that around 85% in most compression libraries is a sensible default.

##### Markup

All of the previous techniques reduce the number of bytes an image uses. This is great for improving the loading speed of those images and the Largest Contentful Paint (LCP) metric. However, to improve the Cumulative Layout Shift (CLS) metric, we must minimize changes to the page layout. This can be done by informing the browser of the image size ahead of time.

On a poorly optimized webpage, images will be embedded without their dimensions in the markup. The browser fetches those images, and only once it has received the header bytes of the image can it know about the dimensions of that image. The effect is that the browser first renders the page where the image takes up zero pixels, and then suddenly redraws with the dimensions of that image before actually loading the image pixels themselves. This is jarring to users and has a serious impact on usability.

It is important to include dimensions of the image inside HTML markup to allow the browser to allocate space for that image before it even begins loading. This prevents unnecessary redraws and reduces layout shift. It is even possible to set dimensions when dynamically loading responsive images: by informing the browser of the height and width of the original image, assuming the aspect ratio is constant, it will automatically calculate the correct height, even when using a width selector.

```html
<img
  height="9000"
  width="12000"
  srcset="hello_world_1500.jpg 1500w,
          hello_world_2000.jpg 2000w,
          hello_world_12000.jpg 12000w"
  sizes="(max-width: 1500px) 1500px,
         (max-width: 2000px) 2000px,
         12000px"
  src="hello_world_12000.jpg"
  alt="Hello, world!"
/>
```

Finally, lazy-loading is a technique which reduces the work that the browser has to perform right at the onset of page loading.
By deferring image loads to only just before they're needed, the browser can prioritize more critical assets such as fonts, styles and JavaScript. By setting the `loading` property on an image to `lazy`, you instruct the browser to only load the image as it enters the viewport. For example, on an e-commerce site which renders a grid of products, this would mean that the page loads faster for visitors, and seamlessly fetches images below the fold, as a user scrolls down. This is [supported by all major browsers except Safari](https://caniuse.com/loading-lazy-attr) which currently has this feature hidden behind an experimental flag.

```html
<img loading="lazy" … />
```
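
To make the aspect-ratio point from the Markup section concrete, here is a small worked calculation using the `width="12000"` and `height="9000"` attributes from the example markup. It approximates the arithmetic a browser can do to reserve space before any pixels arrive; the function name is hypothetical and the rounding is a simplification.

```python
def reserved_height(intrinsic_width: int, intrinsic_height: int, rendered_width: int) -> int:
    """Height to reserve for an image scaled to `rendered_width`,
    keeping the aspect ratio implied by the declared width and height."""
    return round(rendered_width * intrinsic_height / intrinsic_width)


if __name__ == "__main__":
    # Declared dimensions from the markup example: 12000 x 9000 (a 4:3 image).
    for width in (1500, 2000, 12000):
        print(width, "->", reserved_height(12000, 9000, width))
```

For the 1500-pixel-wide source, the reserved height works out to 1500 × 9000 / 12000 = 1125 pixels, so the layout does not shift when the image finishes loading.
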